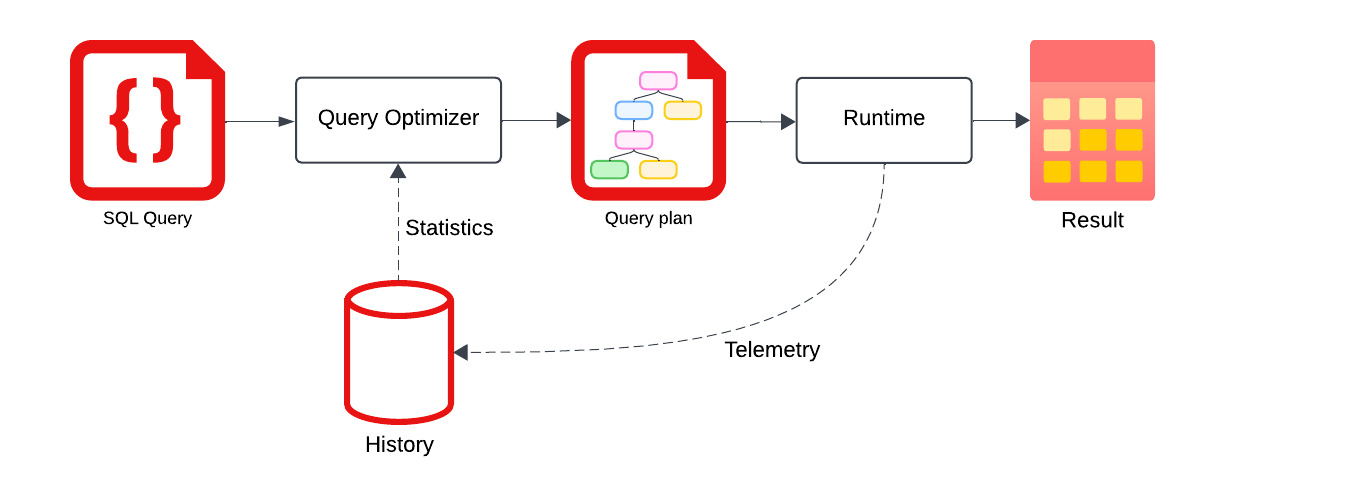

Good query plans depend on the planner knowing about the data in your tables. Firebolt offers two ways of collecting statistics about your data: automated column statistics and history-based statistics. Automated column statistics deliver transactionally consistent information, but collecting them affects insert and update performance. History-based statistics use machine learning to derive information from past query executions. They offer a more holistic view of query plans, with statistics not only for base tables but also for intermediate results. Each source has its own strengths and weaknesses, and you can combine them based on your workload. This page describes how to collect history-based statistics and how the optimizer uses them during planning.

Overview

History-based statistics (HBS) is a feature that enables Firebolt’s query optimizer to derive information from past query executions. With HBS enabled, you can record a snapshot consisting of cardinality information from past query executions and estimation models learned from these examples. This results in:

- Better query plans - The planner can choose better join orders, aggregation strategies, and execution paths

- Improved performance - Statistical information helps estimate cardinalities more accurately

- Minimal impact on query planning times - Statistics are cached in memory for fast access, which keeps the planning overhead to a minimum (usually only a few microseconds)

Data Collection

To start collecting data from a clean training state, we recommend creating or starting a fresh dedicated engine. At the moment, we recommend using an engine with 1 cluster and 1 node. You can then send representative queries to that engine and mark them for collection as training data. If you are using the Firebolt UI, set the session-level setting as_hbs_training_data by running SET as_hbs_training_data = true;

If you run the queries from an SDK or via a client library, pass as_hbs_training_data = true for each query (see System settings).

Send a variety of queries that cover your workload so the model has enough training data and does not overfit.
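A training session in the Firebolt UI might look like the following sketch. Only the as_hbs_training_data setting comes from this page. The tables orders and customers and their columns are hypothetical placeholders for your own workload, and switching the setting back to false at the end is an assumption based on it being a boolean session setting.

```sql
-- Mark all following queries in this session as HBS training data.
SET as_hbs_training_data = true;

-- Hypothetical representative queries; replace with queries from your workload.
SELECT c.region, COUNT(*) AS order_count
FROM orders o
JOIN customers c ON o.customer_id = c.customer_id
WHERE o.total_amount > 100
GROUP BY c.region;

SELECT o.customer_id, SUM(o.total_amount) AS total_spent
FROM orders o
WHERE o.status = 'shipped'
GROUP BY o.customer_id;

-- Assumption: setting the flag back to false stops marking queries.
SET as_hbs_training_data = false;
```

Mixing joins, filters, and aggregations with different predicates gives the models a broader set of examples than repeating one query shape.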

You can review the queries you have run on the engine in the information_schema.engine_query_history table.
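As a rough sketch, the most recent queries on the engine can be listed from information_schema.engine_query_history. The column names used here (query_text, status, start_time) are assumptions about that view; check its reference page for the exact schema.

```sql
-- List the latest queries run on this engine.
-- Column names are assumed; adjust them to the view's actual schema.
SELECT start_time, status, query_text
FROM information_schema.engine_query_history
ORDER BY start_time DESC
LIMIT 20;
```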

Note that the training data is not persisted, so if you restart the engine, all training data accumulated so far will be lost.

Snapshot Creation

Once you have collected enough training data, create a snapshot so it can be used for query planning:

CALL CREATE_HBS_OBJECT()

This creates a snapshot with the following components in the fb_catalog.public.history_based_statistics table:

- Specific join cardinality examples that record the actual row count from past queries with the same join structure.

- Specific plan cardinality examples that record the actual row count from past queries with the exact same subplan.

- A QuickSel model that learns the data distribution of filter selectivities.
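Creating a snapshot and then looking at what was stored could be sketched as follows. This assumes the table can be queried under the fully qualified name given above. Its column layout is not described on this page, so the example selects all columns instead of naming any.

```sql
-- Build a snapshot from the training data collected on this engine.
CALL CREATE_HBS_OBJECT();

-- Inspect the stored snapshots (assumption: the table is readable
-- under this fully qualified name from your current session).
SELECT *
FROM fb_catalog.public.history_based_statistics;
```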

Statistics Inference

To use a snapshot for query planning, set it per query with the hbs_object_id setting.

Cache Behavior

Collected statistics are stored in the fb_catalog database, but to reduce planning time, they are cached in memory.

You can monitor the cache in information_schema.engine_caches (filter by type = 'history_based_statistics') and clear it with CLEAR HISTORY BASED STATISTICS CACHE.
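The two cache operations described above can be combined into a short check. The filter value and the CLEAR statement are taken from this page. The other columns of engine_caches are not listed here, so the sketch selects all of them.

```sql
-- Inspect the in-memory HBS cache on the current engine.
SELECT *
FROM information_schema.engine_caches
WHERE type = 'history_based_statistics';

-- Drop the cached statistics.
CLEAR HISTORY BASED STATISTICS CACHE;
```

Running the SELECT again after clearing is a simple way to confirm the cache was emptied.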

Related Topics

- CLEAR CACHE - clear caches, including the one for HBS

- information_schema.engine_caches - inspect the HBS in-memory cache

- Cardinality Estimation - how statistics affect query planning

- Inspecting Query Plans - analyze query execution plans

- System settings - full reference (including as_hbs_training_data and hbs_object_id)